|

April 7, 2022: Enabling Personalized Interventions (EPI)

Consortium Meeting and contributions at ICT.Open.

|

|

|

Consortium meeting:

|

| When |

Thursday, April 7th 2022

|

| Where |

ICT.OPEN at the RAI, Amsterdam

|

Abstract

|

Consortium and dissemination meeting

|

Agenda

| Time | Speaker | Topic | Slides |
| --- | --- | --- | --- |
| 11:30 | | Start Quarterly Consortium Meeting | |
| | Eline van Dulm | Welcome, overview of EPI | slides |
| 11:40 | | Presentations PhD students | |
| | Rosanne Turner | RQ1-2: Real-time evidence collection in data streams | slides |
| | Saba Amiri | RQ4: Private Federated Machine Learning The EPI Project. | slides |
| | Milen Girma Kebede | RQ5: Automating regulatory constraints and data governance in healthcare | slides |
| | Jamila Alsayed Kassem | RQ6: The EPI Framework: A dynamic infrastructure to support healthcare use cases. | slides |
| | Tim Muller | Brane status update | slides |
| | Corinne Allaart | RQ3: Vertically partitioned machine learning for prediction of cerebrovascular accident (CVA) rehabilitation. | slides |
| 12:40 | | EPI Proof Of Concept | |
| | Marc van Meel | EPI PoC – collaboration UMCU & St. Antonius | |
| 12:55 | | Any other business | |
| 13:00 | | Closure | |

Presentations presented at ICT.Open

|

Abstract: Towards a policy enforcement infrastructure using eFLINT (Slides) by Lu-Chi Liu.

Organizations are required to comply with national and international privacy regulations that protect data subjects' personal data. Therefore, we need authorisation systems that can capture and enforce privacy regulations. This work focuses on the design and implementation of an authorisation system that can enforce conditions, authorisations and obligations from legal norms. In previous work, we employed eFLINT, a domain-specific language, to capture policies extracted from a regulatory document that governs members of the SIOPE DIPG/DMG Network, a healthcare use case. The language allows for the reuse of specifications, which is shown by reusing the GDPR and DIPG regulatory document interchangeably. Additionally, we generate system-level eFLINT policies, such as read and write policies, from social policies.

We are currently working on a prototype for the DIPG Registry. The prototype is designed using two approaches: implementing the eFLINT reasoner either as a Policy Administration Point (PAP) or as a Policy Decision Point (PDP). In the first approach, eFLINT can be used to create policies and generate enforceable XACML policies. XACML has several limitations when it comes to specifying rules from legal norms. Using eFLINT as a PAP can mitigate these issues as an extension to the XACML architecture. In the second approach, the eFLINT reasoner can be used as both the PAP and the PDP. When eFLINT is used as the PDP, the Policy Enforcement Point (PEP) signals every access request or system event to the eFLINT reasoner. The eFLINT reasoner evaluates requests or events against deployed eFLINT rules and makes access decisions.
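The second approach, in which the PEP forwards every request to a reasoner acting as PDP, can be illustrated with a minimal Python sketch. All names here (`AccessRequest`, `PolicyDecisionPoint`, `PolicyEnforcementPoint`, the example rule) are hypothetical and chosen for illustration only; the rules are plain Python predicates, not eFLINT syntax, and the toy decision logic does not reproduce how the eFLINT reasoner actually evaluates norms.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass(frozen=True)
class AccessRequest:
    actor: str      # who is asking
    action: str     # e.g. "read" or "write"
    resource: str   # what is being accessed


# A rule returns True when it permits the request. This is a hypothetical
# stand-in for a deployed policy; it is not eFLINT syntax.
Rule = Callable[[AccessRequest], bool]


@dataclass
class PolicyDecisionPoint:
    """Toy PDP: permits a request if at least one deployed rule permits it."""
    rules: List[Rule] = field(default_factory=list)

    def deploy(self, rule: Rule) -> None:
        # Plays the PAP role in this sketch: adds a rule to the deployed set.
        self.rules.append(rule)

    def decide(self, request: AccessRequest) -> bool:
        # Deny by default when no rule matches.
        return any(rule(request) for rule in self.rules)


class PolicyEnforcementPoint:
    """Toy PEP: forwards every request to the PDP and enforces its decision."""

    def __init__(self, pdp: PolicyDecisionPoint) -> None:
        self.pdp = pdp

    def handle(self, request: AccessRequest) -> str:
        return "permit" if self.pdp.decide(request) else "deny"


pdp = PolicyDecisionPoint()
# Hypothetical policy: network members may read, nothing else is allowed.
pdp.deploy(lambda r: r.actor == "member" and r.action == "read")
pep = PolicyEnforcementPoint(pdp)

read_decision = pep.handle(AccessRequest("member", "read", "registry"))
write_decision = pep.handle(AccessRequest("member", "write", "registry"))
```

In this sketch the PEP holds no policy logic of its own, which mirrors the separation described above: decisions live with the reasoner, and enforcement only relays requests and applies the result.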

|

|

Posters presented at ICT.Open

EPI Framework: Approach for traffic redirection through containerised network functions

Jamila Alsayed Kassem

On the road towards personalised medicine, secure data-sharing is an essential prerequisite to enable healthcare use-cases (e.g. training and sharing machine learning models, wearables data-streaming, etc.). On the other hand, working in silos still dominates today’s health data usage. A significant challenge to address here is to set up a collaborative data-sharing environment that supports the requested application while also ensuring uncompromised security across communicating nodes. We need a framework that can adapt the underlying infrastructure, taking into account norms and policy agreements, the requested application workflow, and network and security policies. The framework should process and map those requirements into setup actions. At the low packet level, the framework should be able to enforce the setup route by intercepting and redirecting traffic.

extended abstract here

|

|

|

|

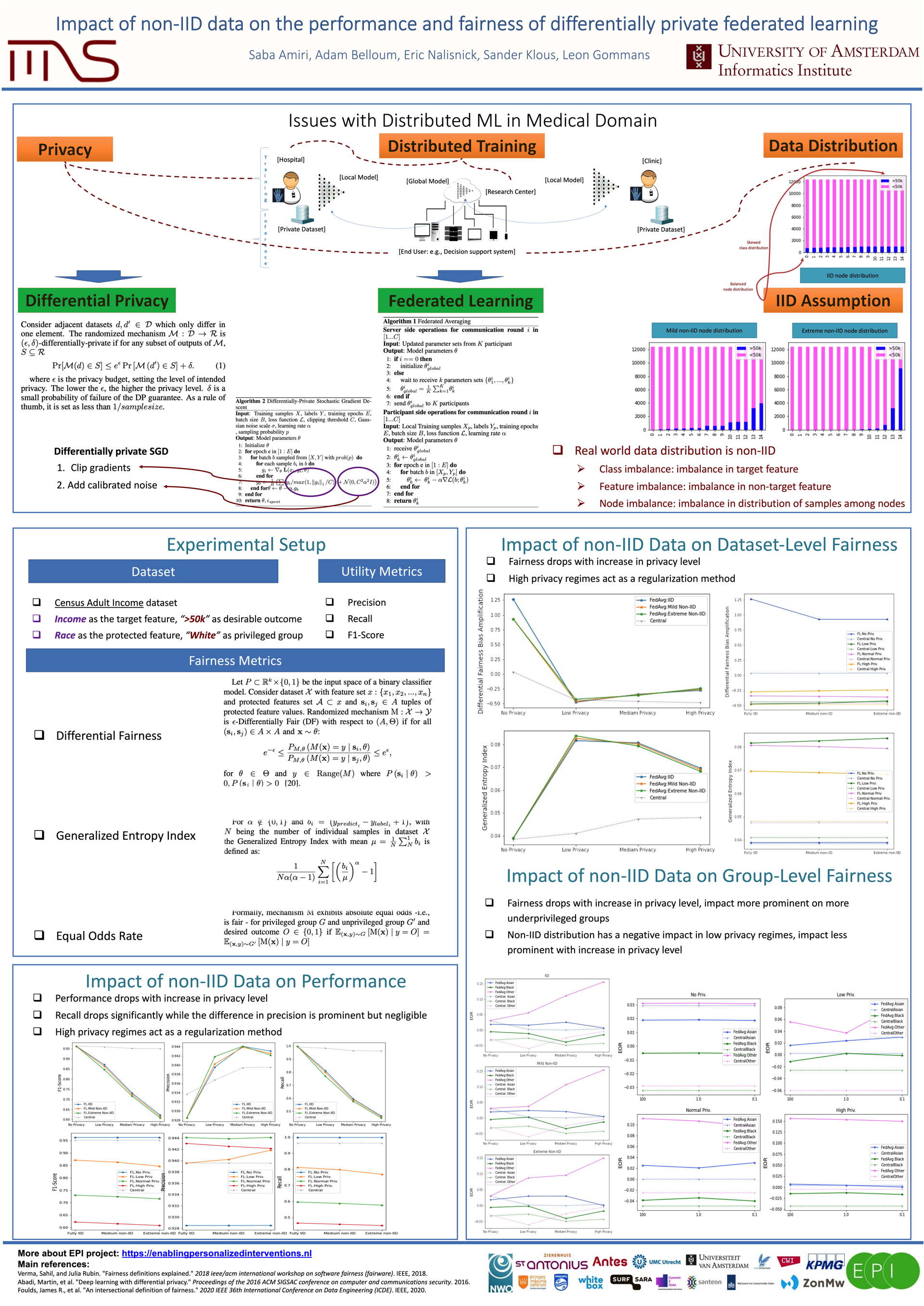

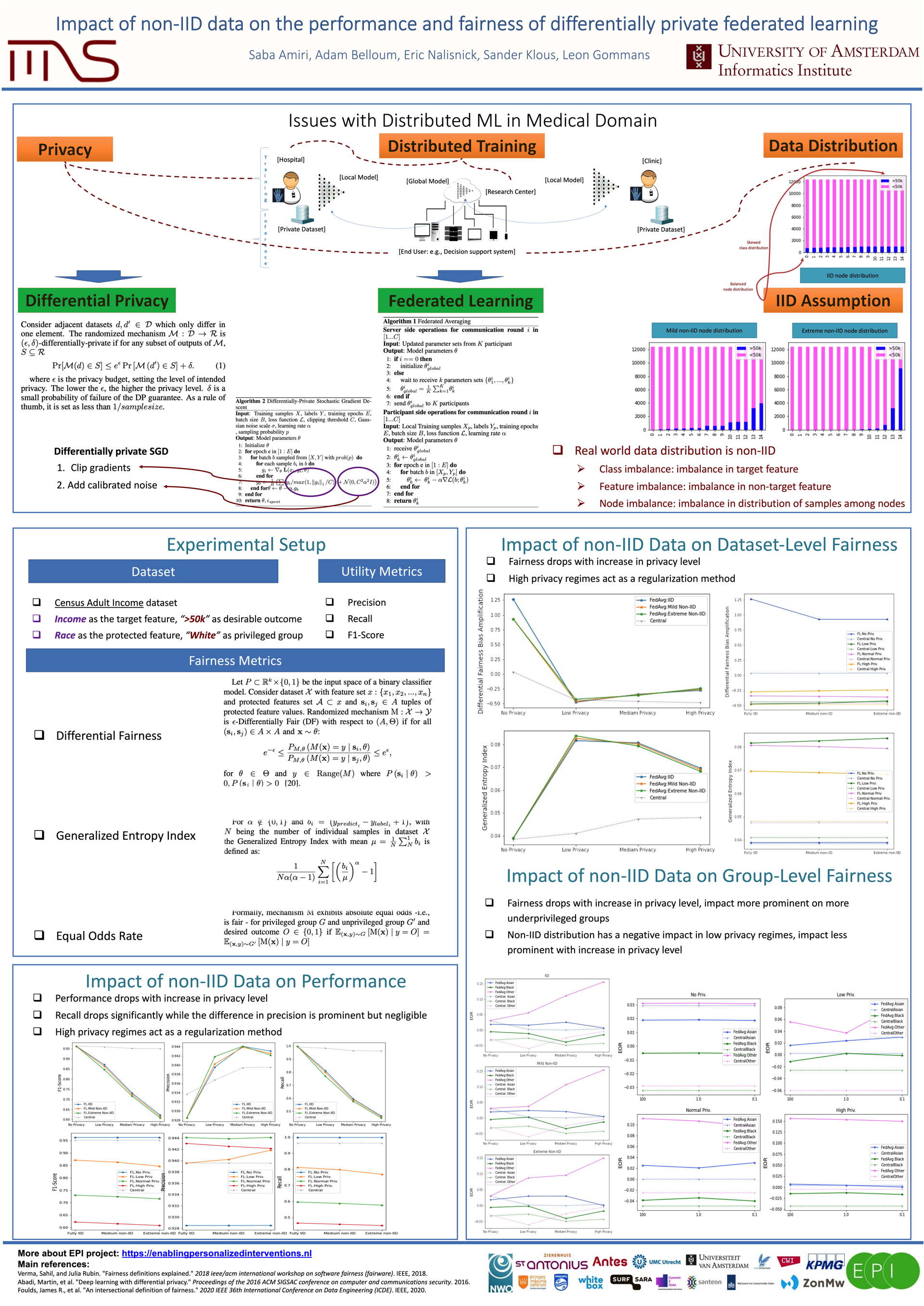

Impact of non-IID data on the performance and fairness of differentially private federated learning.

Saba Amiri

Federated Learning enables distributed data holders to train a shared machine learning model on their collective data. It provides some measure of privacy by removing the need for participants to share their private data, but it has still been shown in the literature to be vulnerable to adversarial attacks. Differential Privacy has been shown to provide rigorous guarantees and sufficient protection against different kinds of adversarial attacks and has been widely employed in recent years to perform privacy-preserving machine learning. One common trait of many recent methods for federated learning and federated differentially private learning is the assumption of IID data, which in real-world scenarios most certainly does not hold true. In this work, we perform a comprehensive empirical investigation of the effect of non-IID data on federated, differentially private deep learning. We show that non-IID data has a negative impact on both the performance and the fairness of the trained model and discuss the trade-off between privacy, utility and fairness. Our results highlight the limits of common federated learning algorithms in a differentially private setting to provide robust, reliable results across underrepresented groups.
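To make the two ingredients concrete, the following Python sketch shows one common way to simulate non-IID clients (a label-skewed split) and one common way to aggregate client updates with differential-privacy-style clipping and Gaussian noise. It is an illustrative assumption, not the experimental setup of the poster: the function names, the shard-based split and the parameters `max_norm` and `noise_std` are hypothetical, and calibrating the noise to a specific privacy budget is left out.

```python
import random
from typing import List


def partition_by_label(labels: List[int], num_clients: int) -> List[List[int]]:
    """Label-skewed (non-IID) split: sort sample indices by label, then cut
    them into contiguous shards, so each client sees only a few classes."""
    order = sorted(range(len(labels)), key=lambda i: labels[i])
    shard = len(order) // num_clients
    return [order[c * shard:(c + 1) * shard] for c in range(num_clients)]


def clip(update: List[float], max_norm: float) -> List[float]:
    """Scale an update down so that its L2 norm is at most max_norm."""
    norm = sum(x * x for x in update) ** 0.5
    scale = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [x * scale for x in update]


def dp_average(updates: List[List[float]], max_norm: float,
               noise_std: float, rng: random.Random) -> List[float]:
    """Clip each client update, average them, and add Gaussian noise to
    every coordinate. noise_std is a free parameter in this sketch."""
    clipped = [clip(u, max_norm) for u in updates]
    n, dim = len(clipped), len(clipped[0])
    return [sum(u[d] for u in clipped) / n + rng.gauss(0.0, noise_std)
            for d in range(dim)]


# Twenty samples over four classes, split across four clients.
labels = [0, 1, 2, 3] * 5
parts = partition_by_label(labels, num_clients=4)
client_labels = [{labels[i] for i in part} for part in parts]

# With noise_std=0.0 the aggregate is just the average of clipped updates.
aggregate = dp_average([[3.0, 4.0], [0.0, 0.0]], max_norm=1.0,
                       noise_std=0.0, rng=random.Random(0))
```

Here each client ends up holding samples of a single class, an extreme case of the non-IID setting discussed above. Clipping bounds the influence of any single client's update before noise is added, which is the usual reason the two steps appear together.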

|

|

|

|